Ann's Blog

Monday, April 1, 2024

Friday, March 1, 2024

Falafel

Preparation Time: 15 minutes (excluding soaking time)

Cooking Time: 15 minutes

Servings: Makes approximately 20 falafels

Ingredients:

1 cup dried chickpeas (soaked overnight, avoid canned chickpeas)

½ cup roughly chopped onion

1 cup roughly chopped parsley (about one large bunch)

1 cup roughly chopped cilantro (about one large bunch)

1 small green chile pepper (serrano or jalapeno)

3 garlic cloves

1 teaspoon cumin

1 teaspoon salt

½ teaspoon cardamom

¼ teaspoon black pepper

2 tablespoons chickpea flour (or other flour)

½ teaspoon baking soda

Oil for frying

Instructions:

- Soak Chickpeas: Place dried chickpeas in a bowl and cover with water. Allow them to soak overnight. Drain before using.

- Chop onion, parsley, cilantro, green chile pepper, and garlic cloves. In a food processor, combine soaked chickpeas, chopped onion, parsley, cilantro, green chile pepper, and garlic cloves. Pulse until finely minced.

- Add cumin, salt, cardamom, black pepper, chickpea flour, and baking soda to the mixture. Blend until well combined. Shape the mixture into small patties, about 1.5 inches in diameter.

- In a pan, heat oil for frying over medium heat. Carefully place falafel patties into the hot oil and fry until golden brown on both sides. Once fried, place falafel on a paper towel to drain excess oil.

- Serve falafel hot with your favourite sauce or in pita bread with veggies.

Pro Tips:

- Ensure chickpeas are thoroughly soaked for a smoother texture.

- Adjust the spice level by adding more or less green chile pepper.

- Use a cookie scoop for uniform falafel patties.

- Test oil temperature by dropping a small piece of mixture; it should sizzle and float.

- Enjoy your homemade falafel!

Friday, February 16, 2024

Cookies

Shortbread Cookies

Ingredients:

- 1 cup (226g) vegan butter, softened

- 1/2 cup (100g) granulated sugar

- 2 cups (240g) all-purpose flour

- Zest of 2 lemons

- 2 tablespoons lemon juice

- 1/2 teaspoon vanilla extract

- Pinch of salt

- Powdered sugar for dusting (optional)

Instructions:

- In a large bowl, cream together the softened vegan butter (or butter of your choice) and granulated sugar until light and fluffy.

- Add the lemon zest, lemon juice, and vanilla extract. Mix until well combined.

- Sift in the all-purpose flour and add a pinch of salt. Mix until the dough comes together. Be careful not to overmix.

- Divide the dough into two equal portions. Roll each portion into a log shape, about 1.5 inches (4 cm) in diameter. Wrap the logs in plastic wrap and refrigerate for at least 1-2 hours until firm.

- Preheat your oven to 350°F (180°C). Line a baking sheet with parchment paper.

- Slice the chilled dough into rounds, about 1/4 inch (0.6 cm) thick, and place them on the prepared baking sheet.

- Bake in the preheated oven for 10-12 minutes or until the edges are lightly golden.

- Allow the cookies to cool on the baking sheet for a few minutes before transferring them to a wire rack to cool completely.

- Optional: Dust the cooled cookies with powdered sugar for extra sweetness.

Thursday, January 18, 2024

Your value

This is a 1,000-gram (1 kg) iron bar.

Its raw value is around $100.

If you decided to make horseshoes, its value would increase to $250.

If, instead, you decided to make sewing needles, the value would increase to about $70,000.

If you decided to produce watch springs and gears, the value would increase to about $6 million.

Further still, if you decided to manufacture precision laser components out of it, like the ones used in lithography, it would be worth around $15 million.

Your value is not just in what you are made of but, above all, in how you make the best of who you are.

Thursday, December 21, 2023

Wednesday, December 13, 2023

Reminder

This year was tough. I said goodbye to my Nani, or Ammachi in my mother tongue. I didn’t want to do that. I didn’t go to the funeral, and it has been tough to accept that she will not be at home the next time I go home. Missing you 😘

Tuesday, November 21, 2023

Monday, October 30, 2023

Monday, September 4, 2023

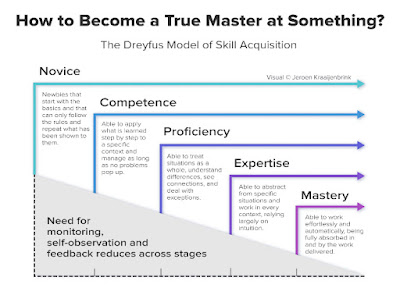

Dreyfus Model

Back in 1980, Stuart and Hubert Dreyfus wrote a phenomenal article titled “A five-stage model of the mental activities involved in directed skill acquisition.” Ever since, it has been a reference model and foundation for how someone develops from novice to master.

The five stages are:

• Novice: newcomers who start with the basics and can only follow the rules and repeat what has been shown to them.

• Competence: able to apply what is learned step by step to a specific context and manage as long as no problems pop up.

• Proficiency: able to treat situations as a whole, understand differences, see connections, and deal with exceptions.

• Expertise: able to abstract from specific situations and work in every context, relying largely on intuition.

• Mastery: able to work effortlessly and automatically, being fully absorbed in and by the work delivered.

This is not just to create some sort of hierarchy or levels. The main relevance is that people learn differently at different stages.

At the Novice and Competence stages, learning needs to be rule-driven and instruction-based. Learners need a clear process and guidelines that they can understand and follow.

At the Proficiency and Expertise stages, learning is much more based on personal development and gaining experience in different circumstances.

And, at the Mastery stage, learning is entirely individual. Rather than listening to a trainer or practicing cases, at this stage the best way to learn is to figure things out yourself.

Tuesday, August 1, 2023

30 Plants A week

Many of us are already all about getting our 5-a-day of fruit and vegetables, but what’s the deal with the 30 plants a week challenge and how is this any different?

Saturday, July 1, 2023

Travel Etiquette

- Never bring smelly food

- Bring a bag you can carry

- If you have to switch seats, switch to a comparable seat

- Feel free to recline your seat, but check on the person behind you first

- Don't bother people wearing headphones

- Always wear headphones to avoid hearing loud noises

- Keep your feet off the airplane seats

- Keep your arms and legs to yourself

- Don't touch the headrests when walking through the aisle

- The armrest belongs to the person in the middle seat

- Control your children

- Keep your things in your own space

- Be kind to the cabin crew

- If you have a problem with another passenger, call a flight attendant immediately

- Wait until the seatbelt sign is off to start exiting the plane

- Don't treat the plane like your living room

- Ask to skip the line if you have a connecting flight

- Keep your voice down when talking to someone

- Never crawl over anyone when in the middle or window seat

- Keep your legs out of the aisle

Thursday, June 1, 2023

Cauliflower Curry

Ingredients

- 1 tbs ground coriander

- 4 cardamom pods

- 1 cinnamon stick

- 1 tbs cumin seeds

- Curry leaves

- 1 tbs yellow and black mustard seeds

- 1 tsp chilli powder

- 1 bunch coriander

- Thumb sized piece of fresh turmeric

- Yellow split peas

- Two white onions

- 1 cauliflower

- 4 cloves of garlic

- Thumb sized piece of ginger

- Tin of coconut milk

Method

- Toast the cardamom pods, cinnamon stick, cumin seeds, curry leaves and mustard seeds in a pan over medium heat until they become fragrant.

- Add some oil to the pan as well as the rest of the spices to toast.

- Remove the stalks from the coriander then wash and chop them finely. Grate some fresh turmeric and add it to the pan with the coriander stalks and a generous pinch of salt and pepper.

- Add the dried split peas to the pan as well as a litre of water then cover and leave to cook.

- Slice the onions and fry them in a pan with some olive oil until they have caramelised.

- While the onions are caramelising, chop the cauliflower into florets, toss with olive oil and roast until tender.

- Finely dice the garlic and ginger and add to the pan with the onions. Once they're caramelised add the contents of the pan to the pot with the split peas and spices.

- Add the coconut milk as well as the roasted cauliflower and give everything a good stir.

- Finish off the curry by mixing in the chopped coriander leaves, then serve.

Wednesday, May 17, 2023

Empathy

"Empathy is about finding echoes of another person in yourself." - Mohsin Hamid

"The greatest gift you can give someone is your kindness, because it makes someone feel right at home even when they're not." - Carol Rifka Brunt

Everyone we meet is fighting a battle we know nothing about. Kindness and empathy can go a long way in making their fight a little easier.

Here are four ways we can show kindness and empathy in our professional lives:

🎯1. Listen actively: In work environments, it's common to be caught up in our own task lists and goals. However, actively listening to our colleagues' concerns, opinions, and challenges can show empathy. It can make them feel heard and valued, thus strengthening your working relationships.

🎯2. Offer support: Kindness can take many forms, and offering support is one of them. Sometimes, a colleague might be struggling with work overload, personal issues, or just feeling demotivated. Offering support, whether through a helping hand or just a kind word, can help alleviate their stress and make them feel valued.

🎯3. Avoid judgment: Empathy is about putting ourselves in another person's shoes. It's important to avoid judging others based on their work style, communication style, or personal background. Instead, focus on understanding their perspectives and respecting their differences.

🎯4. Celebrate successes: Acknowledging and celebrating achievements can go a long way. Whether it's a small win or a big one, recognizing someone's success can show empathy and build a sense of community within a work environment.

Monday, May 1, 2023

Pancakes

Ingredients

- 1/3 cup whole-wheat flour

- 1 big tbs protein powder (optional)

- 1/2 tsp baking powder

- 1/2 cup oat milk

- Pinch of salt

Method

- Add all the ingredients together and stir until combined.

- Adjust the milk if needed to reach the desired consistency.

- Heat up a non-stick pan over medium heat (grease the pan with just a bit of coconut oil if necessary) and drop a couple of spoonfuls of the batter onto it.

- Let it cook for 1 minute or so until bubbles begin to form on the surface.

- Flip over and finish cooking until golden brown, around 1-2 minutes. Repeat for all the remaining batter.

- Add your topping (for example, fresh fruit, pure maple syrup, nut butter, or chocolate chips).

Tuesday, April 11, 2023

Your 16-week planner to military fitness

This 16-week fitness programme has been developed by the Army Physical Training Corps, and is based on the one that it issues to potential recruits to enable them to pass basic training. Make it to the end of level 4 (see below) and you'll have achieved the basic level of fitness required of a trained soldier ...

Before you start

To assess your current level of fitness, perform the tests and take the body measurements outlined here, and make a note of the results. These test results will also tell you how many repetitions of press-ups and sit-ups to do during the 16-week programme, by giving you your "max scores" for both. Then, at the end of each four-week level of the programme, record your new test results to monitor your fitness development.

Warming up

You should start every exercise session (including these tests) with a thorough warm-up, and always finish it with a cool-down and stretch. You can read in detail how to follow the army's recommended warm-up routines in the accompanying fitness booklet (pdf) - the first of our exclusive six-part series.

Now perform the following tests with a two-minute break between each:

Press-up max test

Do as many press-ups as you can manage in exactly two minutes - and don't worry if you need to pause for a few seconds before doing more. This figure is your "press-up max score" (see fitness Booklet 3: Upper Body, for an explanation of how to do an official British Army press-up in the army fitness app).

Sit-up max test

After resting for a couple of minutes, now do as many sit-ups as you can in exactly two minutes. Again, don't worry if you need to take a break. This figure is your "sit-up max score". (A detailed explanation of how to do an army sit-up, plus variations, is given in Booklet 5: The Core - Abs and Back, available to download here from January 9).

1.5-mile run test

Next, time yourself running 1.5 miles (2.4 km). If you can't run the whole way, walk where necessary. You can use an athletics track (1.5 miles is six laps) or the milometer in your car to measure the route. Don't worry if it's not exact - just so long as you use the same route next time, so you can make comparisons (see Booklet 2: Running (pdf), for detailed tips on the correct technique).

Sit-and-reach test

Sit on the floor with your legs outstretched, bare feet flexed and against a wall, 8-12 inches apart. Reach forward, fingertips sliding along the floor, and mark the furthest point that you can maintain for three seconds. (If you haven't got someone who can mark the spot for you, roll a pencil along the floor with your fingertips.) Ensure that your legs remain straight and flat on the floor - and don't bounce or jerk to get a better reading. Measure the distance from the wall to your marker to give you this test result.

Waist-to-hip ratio

Your waist to hip ratio is a strong indicator of whether your body weight is healthy. You can work this out by dividing the measurement of your waist in cm by that of your hips in cm. Measure your waist at its narrowest point - usually around your navel. Next, measure your hips at their widest point - usually around the buttocks. Don't pull the tape too tight when doing either of these measurements!

Men: A ratio of 0.90 or under is desirable

Women: A ratio of 0.85 or under is desirable
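
As a rough sketch of the arithmetic described above, the ratio and the two thresholds can be written out in a few lines of Python. The function names and the sample measurements are hypothetical and only for illustration; the thresholds are the ones quoted in the text.

```python
# Thresholds quoted in the text: at or under these values is "desirable".
DESIRABLE_MAX = {"men": 0.90, "women": 0.85}


def waist_to_hip_ratio(waist_cm: float, hip_cm: float) -> float:
    """Divide the waist measurement by the hip measurement (both in cm)."""
    if waist_cm <= 0 or hip_cm <= 0:
        raise ValueError("measurements must be positive")
    return waist_cm / hip_cm


def is_desirable(ratio: float, group: str) -> bool:
    """Compare a ratio against the threshold for 'men' or 'women'."""
    return ratio <= DESIRABLE_MAX[group]


# Hypothetical measurements: 81 cm waist, 100 cm hips.
ratio = waist_to_hip_ratio(81, 100)
print(f"{ratio:.2f}", is_desirable(ratio, "men"), is_desirable(ratio, "women"))
# prints: 0.81 True True
```

Because the result is a ratio, any unit works as long as both measurements use the same one.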

Body mass index (BMI)

This is another tool for assessing body weight, using your weight and height. To work out your BMI, divide your weight in kilograms by your height in metres, then divide this answer by your height again.

· A BMI less than 18.5 indicates you are underweight

· Between 18.5 and 25 indicates a healthy weight

· Between 25 and 30 suggests you are over your ideal weight

· Between 30 and 35 is an indicator of being significantly overweight
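
The BMI calculation and the bands listed above can be sketched in Python as follows. This is only an illustration under a few assumptions: the function names and sample figures are made up, a value that falls exactly on a shared boundary (such as 25) is placed in the higher band, and anything above 35 is reported as outside the ranges listed here.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Weight divided by height, then divided by height again."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return weight_kg / height_m / height_m


def bmi_band(value: float) -> str:
    """Map a BMI value onto the bands listed in the text.

    Assumption: boundary values (25, 30) go to the higher band.
    """
    if value < 18.5:
        return "underweight"
    if value < 25:
        return "healthy weight"
    if value < 30:
        return "over ideal weight"
    if value <= 35:
        return "significantly overweight"
    return "above the ranges listed"


# Hypothetical example: 70 kg and 1.75 m tall.
value = bmi(70, 1.75)
print(f"{value:.1f}", bmi_band(value))
# prints: 22.9 healthy weight
```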

Cooling down

Finally, follow the army's recommended cool-down exercises

Warning: Please check with your doctor before beginning this or any other strenuous exercise regime

Level 1

Week 1

Day 1

Walk-jog for 20 minutes (jog for 2min, walk for 2min, etc)

1 x press-up max score

2 x 5 dorsal raises

2 x 5 tricep dips

1 x sit-up max score

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-minute warm-up

Run fast for 30sec, rest for 2 minutes, repeat 5 times

10-minute cool-down

Day 4

Rest day

Day 5

Walk-jog for 20 minutes (walk for 1min, jog for 3min, repeat 5 times)

1 x press-up max

1 x 5 dorsal raises

1 x 5 tricep dips

1 x sit-up max

Day 6

Rest day

Day 7

Brisk walk for 20-30 minutes or go swimming, cycling or rowing for 15-20min

Week 2

Day 1

Walk-jog for 20 minutes (walk for 1min, jog for 3min, etc)

2 x press-up max

2 x 6 dorsal raises

2 x 6 tricep dips

2 x sit-up max

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-minute warm-up

Run fast for 40 sec, rest for 2 minutes, repeat 5 times

10-minute cool-down

Day 4

Rest day

Day 5

Walk-jog for 20 minutes (jog for 4min, walk for 1min, repeat 4 times)

2 x press-up max

2 x 6 dorsal raises

2 x 6 tricep dips

2 x sit-up max

Day 6

Rest day

Day 7

Brisk walk for 20-30 minutes or go swimming, cycling or rowing for 15-20min

Week 3

Day 1

Jog for 20 minutes (jog for 5min, rest for 1min, etc)

3 x 1/4 press-up max

2 x 7 dorsal raises

2 x 7 tricep dips

3 x 1/2 sit-up max

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-minute warm-up

Run fast for 1 minute, run slowly for 2min, repeat 5 times

10-minute cool-down

Day 4

Rest day

Day 5

Walk-jog for 15 minutes

3 x press-up max

2 x 7 dorsal raises

2 x 7 tricep dips

3 x sit-up max

Day 6

Rest day

Day 7

Brisk walk for 25-35 minutes or go swimming, cycling or rowing for 15-25min

Week 4

Day 1

Jog for 15 minutes

3 x 1/3 press-up max

2 x 8 dorsal raises

2 x 8 tricep dips

3 x 1/3 sit-up max

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-minute warm-up

Run fast for 1 minute, run slowly for 1min, repeat 5 times

10-minute cool-down

Day 4

Rest day

Day 5

Brisk walk for 25-35 minutes or go swimming, cycling or rowing for 15-25min

Day 6

Rest day

Day 7: fitness assessment

Press-ups for 2 minutes to establish new max score

Sit-ups for 2min to establish new max score

1.5-mile timed run

Level 2

Week 5

Day 1

Steady run for 18 minutes

3 x press-up max

3 x 8 squats

3 x sit-up max

3 x 8 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, repeat for 10min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 2 x 12 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 15-20min

Week 6

Day 1

Steady run for 20 minutes

3 x press-up max

3 x 10 lunges

3 x sit-up max

3 x 8 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 10min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 2 x 12 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 20-25min

Week 7

Day 1

Steady run for 20 minutes

3 x press-up max

3 x 12 squats

3 x sit-up max

3 x 12 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 12min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 3 x 12 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 20-25min

Week 8

Day 1

Steady run for 25-30 minutes

3 x press-up max

3 x 14 lunges

3 x sit-up max

3 x 14 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 12min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Brisk walk-run for 30-40 minutes or go swimming, cycling or rowing for 30-40min

10-minute cool-down

Day 6

Rest day

Day 7: fitness assessment

Press-ups for 2 minutes to establish new max score

Sit-ups for 2min to establish new max score

1.5-mile timed run

Level 3

Week 9

Day 1

Steady run for 25-30 minutes

4 x press-up max

4 x 12 squats

4 x sit-up max

4 x 12 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 14min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 3 x 15 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 20-25min

Week 10

Day 1

Steady run for 25-30 minutes

4 x press-up max

4 x 14 lunges

4 x sit-up max

4 x 14 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 14min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 3 x 15 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 25-30min

Week 11

Day 1

Steady run for 25-30 minutes

4 x 20 chin-ups

4 x 16 squats

4 x sit-up max

4 x 16 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 16min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 3 x 20 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 20-25min

Week 12

Day 1

Steady run for 25-30 minutes

4 x press-up max

4 x 18 lunges

4 x sit-up max

4 x 18 dorsal raises

4 x 12 triceps dips

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Run hard for 1 minute, recover for 1 min, continue for 16min

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Brisk walk/run for 30-40 minutes or go swimming, cycling or rowing for 30-40min

10-minute cool-down

Day 6

Rest day

Day 7: fitness assessment

Press-ups for 2 minutes to establish new max score

Sit-ups for 2 minutes to establish new max score

1.5-mile timed run

Level 4

Week 13

Day 1

Steady run for 30-40 minutes

2 x press-ups for 45sec

4 x 15 squats

2 x sit-ups for 45sec

4 x 15 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Alternate running hard, then recovering, for intervals of 1, 2 and 3 minutes (12min in total)

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 4 x 15-20 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 25-35min

Week 14

Day 1

Steady run for 30-40 minutes

2 x press-ups for 45sec

4 x 15 lunges

2 x sit-ups for 45sec

4 x 15 dorsal raises

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Alternate running hard, then recovering, for intervals of 1, 2 and 3 minutes

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 4 x 15-20 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 30-35min

Week 15

Day 1

Steady run for 30-40 minutes

2 x press-ups for 1min

4 x 20 squats

2 x sit-ups for 1min

4 x 20 dorsal raises

4 x 12 triceps dips

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Alternate running hard, then recovering, for intervals of 1, 2, 3, 2 and 1 minutes (18min in total)

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Circuit training: 4 x 15-20 of each exercise (see below for list)

10-minute cool-down

Day 6

Rest day

Day 7

Brisk walk for 30-40 minutes or go swimming, cycling or rowing for 30-40min

Week 16

Day 1

Steady run for 30-40 minutes

2 x press-ups for 1min

4 x 20 squats

2 x sit-ups for 1min

4 x 20 dorsal raises

4 x 12 chin-ups

Rest 30-90sec between sets

Day 2

Rest day

Day 3

10-15 minute warm-up

Alternate running hard, then recovering, for intervals of 1, 2, 3, 2 and 1 minutes

10-minute cool-down

Day 4

Rest day

Day 5

10-minute warm-up

Brisk walk/run for 30-40 minutes or go swimming, cycling or rowing for 30-40min

10-minute cool-down

Day 6

Rest day

Day 7: fitness assessment

Press-ups for 2 minutes to establish new max score

Sit-ups for 2min to establish new max score

1.5-mile timed run

Circuit training exercises

Do the number of repetitions of each exercise advised by the 16-week planner, without a break and in order. Once you've completed one circuit, rest for 2-3 minutes before starting the next. Each exercise is explained in the relevant booklet (all booklets will be available to download here by the end of the week).

1 Press-up

2 Twist sit-up

3 Step-up with knee raise

4 Triceps dip

5 Walking lunge

6 Sit-up

7 One-legged squat

8 Dorsal raise

Note: If "level 1, week 1" of the programme seems too easy for you, feel free to skip a week or even a level. Equally, if a week ever feels too challenging, simply do what you can and repeat the week, rather than moving on to the next one.

Source: The official British army fitness programme | Health & wellbeing | The Guardian